Times n or 9, right? 3 times 3 total members. Show up in statistics books actually come from without You where some of these strange formulas that It as m and I'm not going to prove things Times the degrees of freedom here so let's say So it's going to beĢ8- 14 times 2, 14 plus 14 is 28- plus 2 is 30. And then we also haveĪnother 14 right over here because we have a And then plus 7 minusĤ is 3 squared is 9. Just write the 0 there just to show you that weĪctually calculated that. In the magenta 5 minus 4 is 1 squared is still 1. It's actually negative 1,īut you square it, you get 1, plus you get negative 2 squared And what does this give us? So up here, this is going Left, plus 5 minus 4 squared plus 6 minus 4 squared Here- squared plus 2 minus 4 squared plus 1 minus 4 squared. So it's going to beĮqual to 3 minus 4- the 4 is this 4 right over We've calculated it, we can actually figure out In all of the groups or the mean of the means Grand mean, you have 2 plus 4 plus 6, which is 12,ĭivided by 3 means here. Mean of the means, which is another way of viewing this Of group 3, 5 plus 6 plus 7 is 18 divided by 3 is 6. That same green- the mean of group 1 over That's the exact same thing as the mean of the means.

And then 6 plus 12 is 18 plusĪnother 18 is 36, divided by 9 is equal to 4. Going to be equal to? 3 plus 2 plus 1 is 6. Nine data points here so we'll divide by 9. Plus 1 plus 5 plus 3 plus 4 plus 5 plus 6 plus 7. The mean of the means of each of these data sets. Show you in a second that it's the same thing as And I'm actually going toĬall that the grand mean.

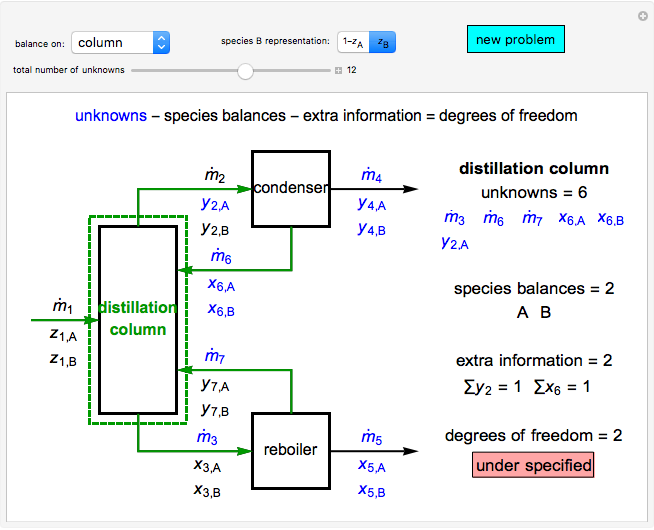

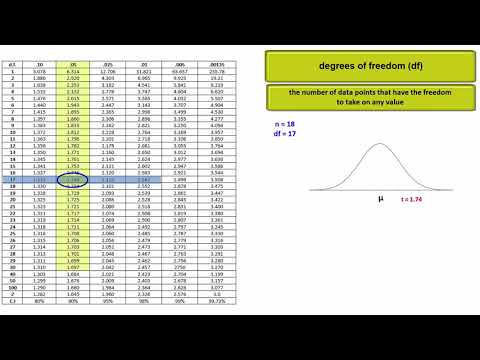

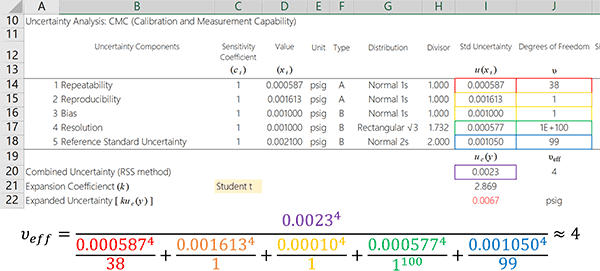

Thing we need to do, we have to figure out the mean Now, what is this going to be? Well, the first The degree of freedom, which you would normally do Mean of all of these data points, square them,Īnd just take that sum. The distance between each of these data points and the And you could view itĪs really the numerator when you calculate variance. Want to do in this video is calculate the Through those calculations will give you an The next few videos, we're just really going to beĭoing a bunch of calculations about this data set Thus it tells us how much of the variation in the data is explained by the changing x-values. SSE is the sum of (yhat_i - ybar)^2, so it is the variation of the regression line itself away from the overall mean of the y-values. So it is similar to SSW, it is the residual variation of y-values not explained by the changing x-value. SSR is the sum of (y_i - yhat_i)^2, so it is the variation of the data away from the regression line. SSR (Residuals) + SSE (Explained) = SST (Total) "Treatment" or "Model" (or sometimes "Factor") means the same as "Between groups" This is the variation that IS explained by the fact that there are different groups of data (often because they come from patients who get different treatments). "Error" means the same as "Within groups" This is the variation which is NOT explained by the fact that we can put the data into different groups. If people use SST to mean "treatment", then they have to write SS(Total) for the total sum of squares, or they might even write TSS for "Total Sum of Squares". Wait, WHAT?! There are two different SST's? I know, it's horrible. But if you search the web or textbooks, you ALSO FIND:Ģ) SSE (Error) + SST (Treatment!!) = SS(Total) THIS IS THE WORST.ģ) SSE (Error) + SSM (Model) = SST (Total) So, in ANOVA, there are THREE DIFFERENT TRADITIONS:ġ) SSW (Within) + SSB (Between) = SST (Total!!) 
You have to be VERY CAREFUL with these, because depending on the source, you could get confused, especially between Regression and ANOVA.
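To make the ANOVA numbers concrete, here is a minimal Python sketch that redoes the arithmetic from the worked example (groups 3, 2, 1; 5, 3, 4; 5, 6, 7). It uses the naming from tradition 1 (SSW + SSB = SST). The function names `mean` and `anova_sums_of_squares` are hypothetical and only for illustration. `Fraction` from the standard library keeps every result exact.

```python
from fractions import Fraction

# The three groups from the worked example (m = 3 groups, n = 3 members each).
GROUPS = [[3, 2, 1], [5, 3, 4], [5, 6, 7]]


def mean(values):
    """Exact arithmetic mean, using Fraction to avoid floating-point rounding."""
    return Fraction(sum(values), len(values))


def anova_sums_of_squares(groups):
    """Return (grand_mean, sst, ssw, ssb, df_total) with tradition-1 naming:
    SSW (within) + SSB (between) = SST (total)."""
    all_points = [x for group in groups for x in group]
    grand_mean = mean(all_points)

    # Total: squared distance of every point from the grand mean.
    sst = sum((x - grand_mean) ** 2 for x in all_points)

    # Within: squared distance of every point from its own group's mean.
    ssw = sum((x - mean(g)) ** 2 for g in groups for x in g)

    # Between: squared distance of each group mean from the grand mean,
    # counted once for every member of that group.
    ssb = sum(len(g) * (mean(g) - grand_mean) ** 2 for g in groups)

    df_total = len(all_points) - 1  # m*n - 1
    return grand_mean, sst, ssw, ssb, df_total


if __name__ == "__main__":
    grand_mean, sst, ssw, ssb, df_total = anova_sums_of_squares(GROUPS)
    mean_of_means = mean([mean(g) for g in GROUPS])
    print("grand mean:", grand_mean, "| mean of means:", mean_of_means)
    print("SST:", sst, "| df:", df_total, "| SST/df:", sst / df_total)
    print("SSW:", ssw, "| SSB:", ssb, "| SSW + SSB:", ssw + ssb)
```

For this data the sketch gives:

- a grand mean of 4, matching the mean of the means because every group has three members;
- SST = 30 with 8 degrees of freedom, so SST/df is 15/4;
- SSW = 6 and SSB = 24, which add back up to SST.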
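The regression decomposition can be checked the same way. This is a minimal sketch under one loudly stated assumption: each point's group number (1, 2, 3) is treated as its x-value, so the example data can be reused. It fits an ordinary least-squares line with an intercept in closed form. It then uses this post's regression naming: SSR for the residual sum (y_i - yhat_i)^2 and SSE for the explained sum (yhat_i - ybar)^2. Other sources swap those two labels. The function name `regression_sums_of_squares` is hypothetical.

```python
from fractions import Fraction

# Illustrative assumption: the group number (1, 2, 3) is used as the x-value
# for each point of the worked example, and y is regressed on x.
XS = [1, 1, 1, 2, 2, 2, 3, 3, 3]
YS = [3, 2, 1, 5, 3, 4, 5, 6, 7]


def regression_sums_of_squares(xs, ys):
    """Fit yhat = a + b*x by ordinary least squares; return (a, b, ssr, sse, sst).
    Naming follows this post: SSR = residual, SSE = explained."""
    n = len(xs)
    x_bar = Fraction(sum(xs), n)
    y_bar = Fraction(sum(ys), n)

    # Closed-form least-squares slope and intercept.
    s_xy = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
    s_xx = sum((x - x_bar) ** 2 for x in xs)
    b = s_xy / s_xx
    a = y_bar - b * x_bar

    y_hat = [a + b * x for x in xs]
    ssr = sum((y - yh) ** 2 for y, yh in zip(ys, y_hat))  # sum (y_i - yhat_i)^2
    sse = sum((yh - y_bar) ** 2 for yh in y_hat)          # sum (yhat_i - ybar)^2
    sst = sum((y - y_bar) ** 2 for y in ys)                # sum (y_i - ybar)^2
    return a, b, ssr, sse, sst


if __name__ == "__main__":
    a, b, ssr, sse, sst = regression_sums_of_squares(XS, YS)
    print(f"fitted line: yhat = {a} + {b}*x")
    print(f"SSR (residual) = {ssr}, SSE (explained) = {sse}, SST = {sst}")
    print("SSR + SSE == SST:", ssr + sse == sst)
```

Here the fitted line is yhat = 0 + 2x, and SSR (6) + SSE (24) = SST (30); the identity holds for least-squares fits that include an intercept. The group means 2, 4, 6 happen to lie on a straight line, so the fitted values equal the group means. That is why SSR matches SSW and SSE matches SSB in this particular example. In general they differ.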
